You can find plenty of instructions on the interwebs for setting up Time Machine to back up to a network share, even a Windows share.

What you can't easily find is how to do it reliably.

I have some recommendations.

1. Use cron scripts to just-in-time mount and dismount the Time Machine share

This is my first and biggest point. Dismounting the backup drive after each backup removes most of the reliability problem of network backup. Before doing this, I rarely got through 3 months without some kind of “the network share got dismounted uncleanly and now it won't mount until I run Disk First Aid on it”.

2. When it comes to data security, if you don't have 3 copies you aren't being serious.

This is a lesson you can take from cloud computing. Both Microsoft and Amazon clouds treat 'at least 3 copies' as the basic level for storing data. That means you want at least 2 independent backup systems for anything on your own machine.

If you combine this thought with the standard “don't put all your eggs in one physical location” motto of backup, you realise that you need a cloud or offsite backup as well as your Time Machine backup. The simplest free solution for your third copy, if 5GB is enough, is to use iDrive or OneDrive.

3. Buy a copy of Alsoft DiskWarrior

This is optional, and certainly less important than the first two points, but running Disk First Aid or fsck doesn't always work. Sad but true. I typically got a “fsck can't repair it properly” incident about once a year. I had a growing stack of hard disks with a year's worth of backup each, all only mountable read-only.

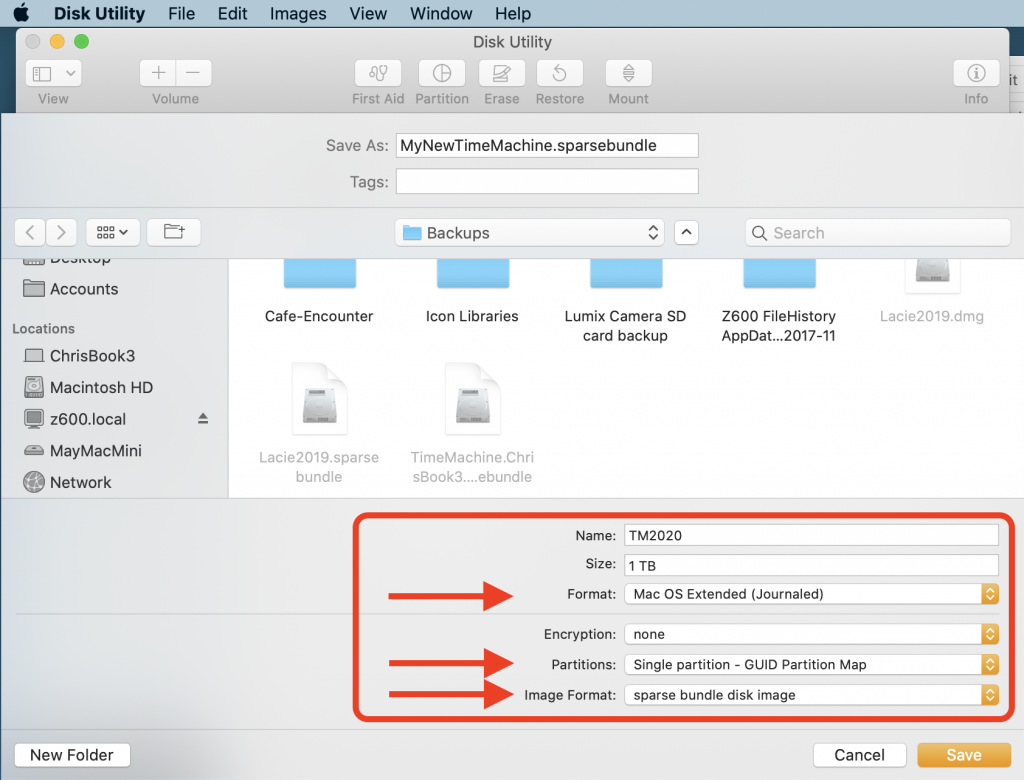

DiskWarrior has so far been reliable in restoring broken volumes back to a fully working state. NB: as of 2021, DiskWarrior can't yet repair APFS volumes, so stay with HFS+ volumes for your Time Machine backups.
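If you want to confirm that a backup volume really is HFS+ rather than APFS, something like the following might help. It is only a sketch: the function names `fs_personality` and `is_hfs_plus` are made up for illustration, and it assumes that `diskutil info` prints a `File System Personality:` line for the mounted volume.

```shell
# Sketch: classify a volume from `diskutil info` output read on stdin.
# Assumption: the output contains a "File System Personality:" line.

# Print the text after "File System Personality:".
fs_personality() {
  awk -F': *' '/File System Personality/ { print $2; exit }'
}

# Succeed only if the personality mentions HFS+.
is_hfs_plus() {
  case "$(fs_personality)" in
    *HFS+*) return 0 ;;
    *)      return 1 ;;
  esac
}

# Only try the real thing where diskutil exists (i.e. on a Mac).
if command -v diskutil >/dev/null 2>&1; then
  if diskutil info "/Volumes/Time Machine Backups" 2>/dev/null | is_hfs_plus; then
    echo "HFS+ volume"
  else
    echo "not HFS+ (or not mounted)"
  fi
fi
```

The mount path is the one used in my script further down; change it to match yours.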

Help with cron scripts and multiple backups

Cron Scripts

Here are my script and cron table for mounting a TM drive from the network, requesting a backup, and dismounting the TM drive. It uses wakeonlan to wake the server from sleep, and ping to confirm it's up before trying to mount. It uses osascript to mount the volume because that deals with saving the network password in your keychain.

#!/usr/bin/env bash

#

# ------------------------------------------------------

# defineNamesAndPaths

smbServer=myServerName

smbServerfqdn=$smbServer.local

smbServerMacAddress='your-server-mac-address-here'

smbVolumeUrl="smb://$smbServerfqdn/D"

tmDiskImageName='TM2023.sparsebundle'

tmVolumeMountedAtPath='/Volumes/Time Machine Backups'

mountedAtMaybePath1="/Volumes/$smbServerfqdn/Backups"

mountedAtMaybePath2="/Volumes/D/Backups"

mountedAtMaybePath3=$(dirname $(find /Volumes -iname $tmDiskImageName -maxdepth 3 2>/dev/null | head -n 1) 2>/dev/null)

declare -a maybeMountedPaths=("$mountedAtMaybePath1/$tmDiskImageName" "$mountedAtMaybePath2/$tmDiskImageName" "$mountedAtMaybePath3/$tmDiskImageName")

#-------------------------------------------------------

# helpAndExit

if [[ "$1" == -h || "$1" == *help* ]] ; then

echo "$0

mount a time machine backup diskimage from the network and kick off a backup.

-unmount : unmount the time machine volume.

-fsck : attach the volume with -nomount and run fsck

Current settings:

Attach from : $smbVolumeUrl/$tmDiskImageName

Mount at : $tmVolumeMountedAtPath

This script is admin-editable. Edit the script to set smbServer url

and mac address, the diskimage name, and the mounted path.

These are the current settings in $0 :

smbServer=$smbServer

smbServerfqdn=$smbServerfqdn #Used for ping

smbServerMacAddress=$smbServerMacAddress #Used for wakeonlan if available

smbVolumeUrl=$smbVolumeUrl

mountedAtMaybePath1=$mountedAtMaybePath1

mountedAtMaybePath2=$mountedAtMaybePath2

tmDiskImageName=$tmDiskImageName

tmVolumeMountedAtPath=$tmVolumeMountedAtPath

"

exit

fi

#-----------------------------------------------------------

# cron jobs get a very truncated path and can't find ping, diskutil, hdiutil, tmutil ...

PATH="$PATH:/sbin:/usr/sbin:/usr/bin:/usr/local/bin"

echo '#-------------------------------------------------------'

date

echo "$0 $@"

#-------------------------------------------------------

# unmountAndExitIfRequested

if [[ "$1" == *unmount* ]] ; then

tmutil status

if [[ ! -d "$tmVolumeMountedAtPath" ]] ; then

echo "$tmVolumeMountedAtPath is already unmounted"

elif [ -n "$(tmutil status | grep 'Running = 0')" ] ; then

echo "unmounting ..."

/usr/sbin/diskutil unmount "$tmVolumeMountedAtPath"

else

echo "not unmounting $tmVolumeMountedAtPath because tm status says still running."

fi

exit

fi

# ---

#-------------------------------------------------------

# wakeAndConfirmPingableElseExit

#

# wakeonlan : I got mine from brew install – https://github.com/jpoliv/wakeonlan/blob/master/wakeonlan

# Otherwise try https://ddg.gg/bash%20script%20wakeonlan

if [[ -x $(which wakeonlan) ]] ; then wakeonlan $smbServerMacAddress ; fi

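# Track success explicitly, so that a ping which succeeds on the 50th try is not treated as a failure

pinged=0

for tried in {1..50} ; do ping -c 1 -t 5 "$smbServerfqdn" 2>&1 && pinged=1 && break ; done

if (( pinged == 0 )) ; then

echo "failed to ping $smbServerfqdn. Exiting."

exit 1

fi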

sleep 10

if (( $tried > 2 )) ; then

echo "waiting in case it was a cold-ish start..."

sleep 10

fi

# ---

#-------------------------------------------------------

# mount Network Volume but nomount sparsebundle and run fsck

if [[ "$1" == *fsck* ]] ; then

echo 'mounting ...'

osascript -e 'mount volume "'$smbVolumeUrl'"'

echo 'Trying locations to attach ...'

ok=0

for tryLocation in "${maybeMountedPaths[@]}"

do

echo trying to mount from $tryLocation …

hdiutil attach $tryLocation -nomount && ok=1 && break

done

if [ $ok -eq 1 ] ; then

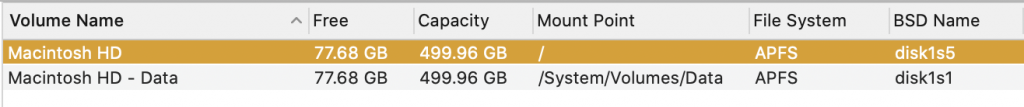

id=$(diskutil list | grep "Time Machine Backups" | grep -oE "disk[0-9]+s[0-9]+")

echo Running fsck on /dev/$id

fsck_hfs -y /dev/$id || exit

fi

fi

# --

#-------------------------------------------------------

# mountAndAttach

echo 'mounting ...'

osascript -e 'mount volume "'$smbVolumeUrl'"'

echo 'Trying locations to attach ...'

ok=0

for tryLocation in "${maybeMountedPaths[@]}"

do

if [ -d $tryLocation ] ; then

echo trying to mount from $tryLocation …

hdiutil attach $tryLocation && ok=1 && echo "attached OK" && break

else

echo not found at $tryLocation

fi

done

[ $ok -eq 0 ] && \

echo "failed to mount $tmDiskImageName at $mountedAtMaybePath1 or $mountedAtMaybePath2 or $mountedAtMaybePath3"

# ---

#-------------------------------------------------------

# requestTimeMachineBackup

echo 'requesting backup.'

tmutil startbackup --auto

echo 'Done.'

# ---

And the crontab lines:

5 10-23/4 * * * /Users/chris/Applications/tmbackupnow.sh >> /Users/chris/Applications/Logs/tmbackupnow.log 2>&1

20,35 10-23/4 * * * /Users/chris/Applications/tmbackupnow.sh -unmount >> /Users/chris/Applications/Logs/tmbackupnow.log 2>&1

This schedule does 3 or 4 backups per working day on top of the local snapshots that Time Machine does anyway. Possibly this is overkill if you are squeezed for disk space. It tries to mount the TM drive and kick off a backup at 5 minutes past 10am, 2pm, 6pm and 10pm, and then tries to dismount the backup disk 15 minutes later and again another 15 minutes after that. Adjust the timing to the size & speed of your backup.
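If fixed unmount slots don't suit the size of your backups, one alternative is to poll until Time Machine reports it has finished and only then unmount. The following is a rough sketch, not what I actually run: `wait_until_idle` is a hypothetical helper, and it relies on the same `Running = 0` text from `tmutil status` that my script greps for.

```shell
# Sketch: poll a status command until its output contains "Running = 0".
# wait_until_idle STATUS_CMD [MAX_TRIES] [DELAY_SECONDS]
# Returns 0 once idle, 1 if still busy after MAX_TRIES polls.
wait_until_idle() {
  status_cmd=$1
  max_tries=${2:-30}
  delay=${3:-60}
  tries=0
  while [ "$tries" -lt "$max_tries" ]; do
    # $status_cmd is deliberately unquoted so "tmutil status" splits into words
    if $status_cmd | grep -q 'Running = 0'; then
      return 0
    fi
    tries=$((tries + 1))
    sleep "$delay"
  done
  return 1
}

# Example use on a Mac (left commented out because it really unmounts):
# wait_until_idle "tmutil status" 30 60 && diskutil unmount "/Volumes/Time Machine Backups"
```

With the defaults this gives up after about 30 minutes, which roughly matches the two unmount attempts in the crontab above.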

At least 3 copies

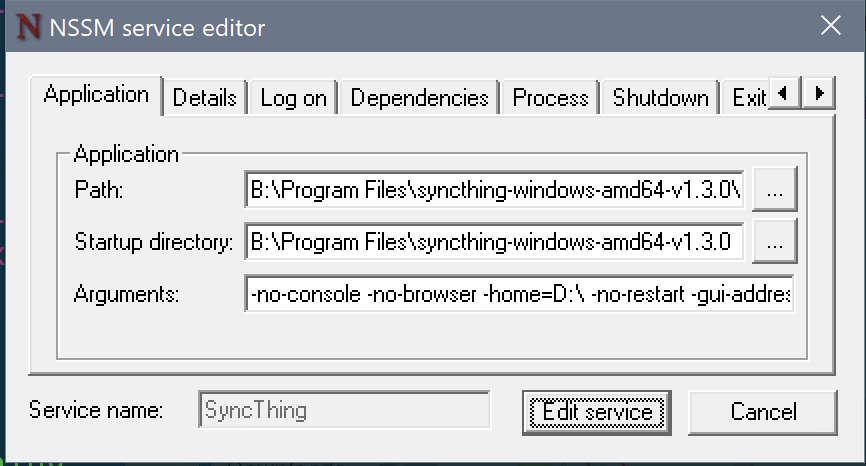

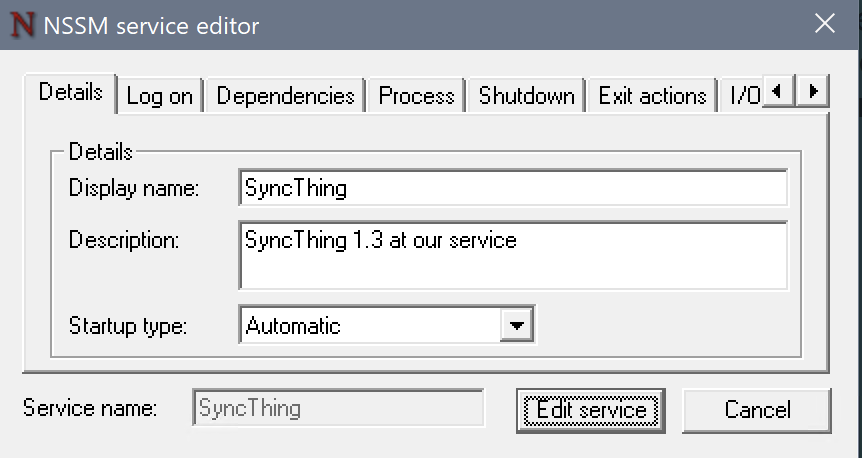

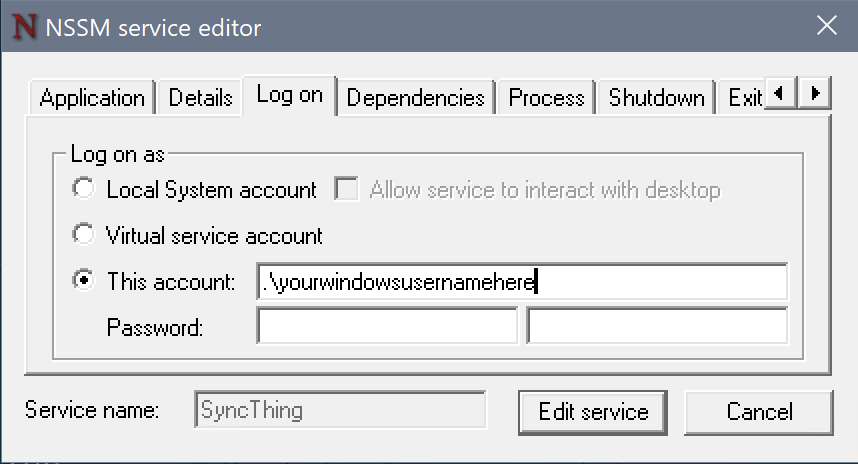

My third copy, on top of Time Machine, is Syncthing “continuous file synchronization”, which is great. It's like being able to set up a load of open source CloudDrives but using your local network too.

My fourth copy is either GitHub or Bitbucket for code, and iDrive or OneDrive for documents and graphics.

My fifth copy will be scripted backups to Azure storage, which seems like the cheapest way to do cloud backups. Meanwhile I'm paying Apple or Microsoft each month for big enough cloud storage.