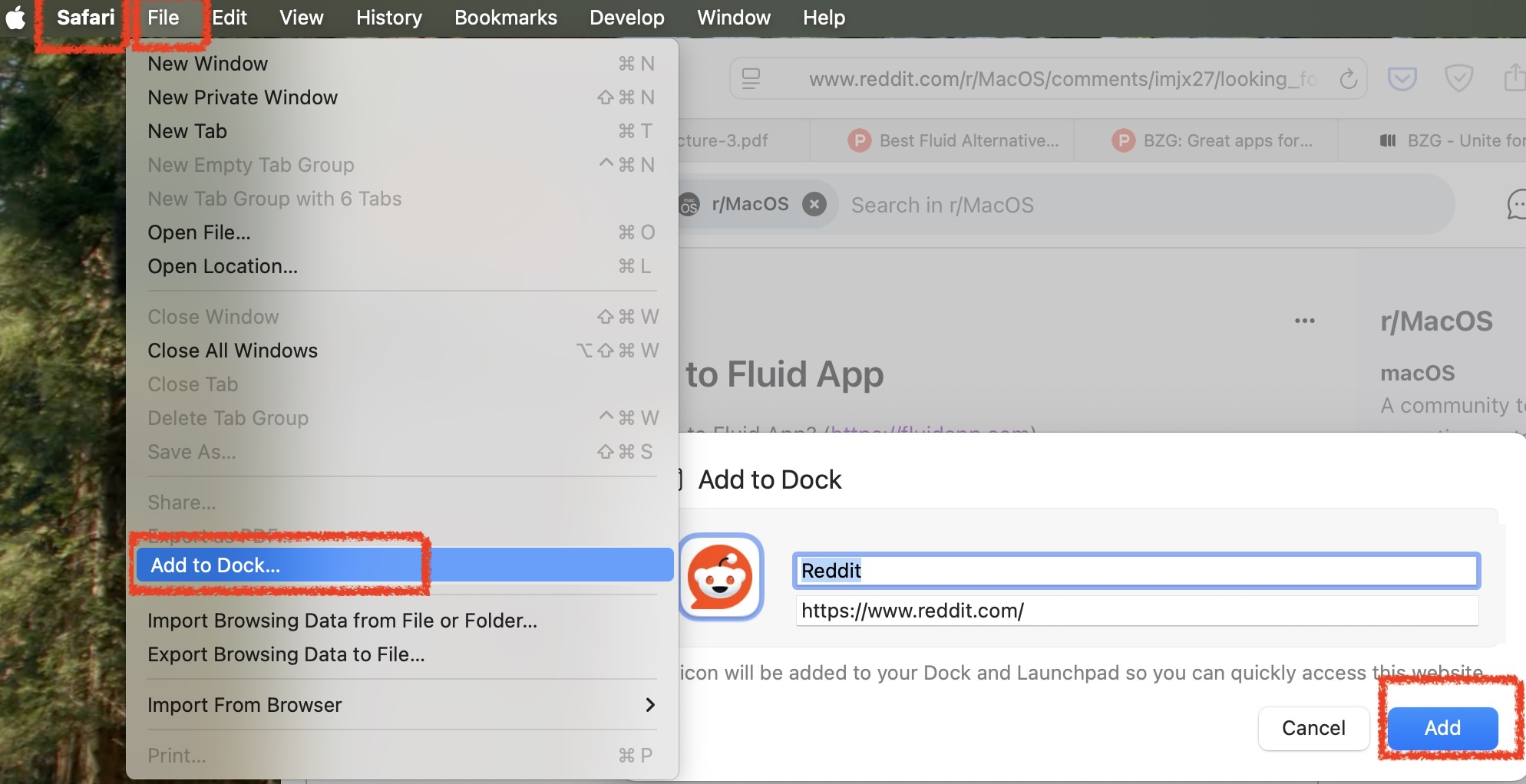

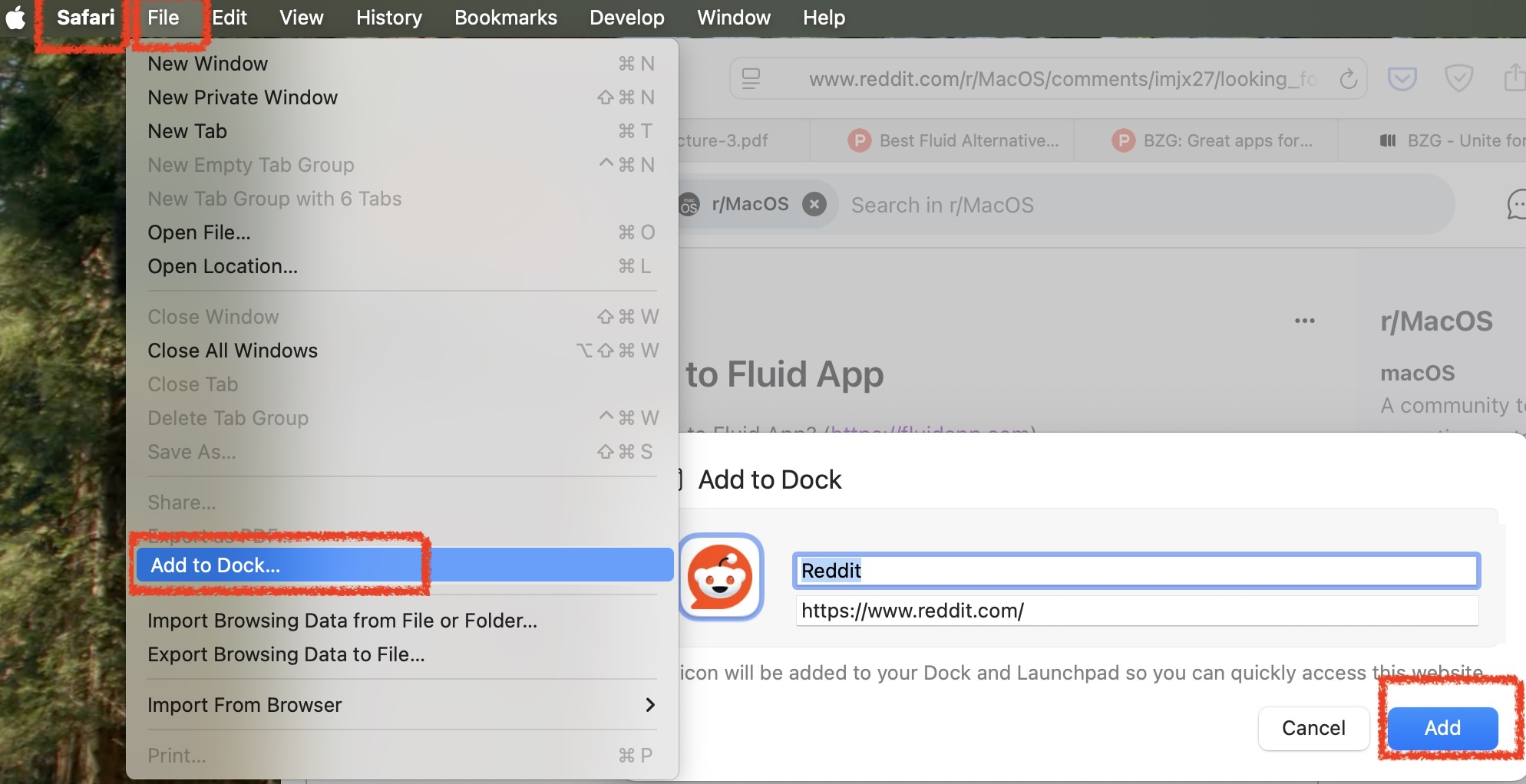

For macOS users looking for a replacement for Fluid.app that runs on Apple Silicon, it's the Add to Dock… command in the Safari File menu (macOS Sonoma and later):

Like Fluid.app, it creates an application in your user's ~/Applications directory.

Coffee | Coding | Computers | Church | What does it all mean?

Mac OS X and Windows


You can find plenty of instructions on the interwebs for setting up Time Machine to back up to a network share, even a Windows share.

What you can't easily find is how to do it reliably.

I have some recommendations.

My first and biggest point: dismount the backup drive after each backup. This removes most of the reliability problem of network backup. Before I did this, I rarely got through 3 months without some kind of “the network share got dismounted uncleanly and now it won't mount until I run Disk First Aid on it” incident.

My second point is a lesson you can take from cloud computing: keep more than one backup. Both Microsoft and Amazon clouds treat 'at least 3 copies' as the basic level for storing data. That means you want at least 2 independent backup systems for anything on your own machine.

If you combine this thought with the standard “don't put all your eggs in one physical location” motto of backup, you realise that you need a cloud or offsite backup as well as your time machine backup. The simplest free solution for your third copy, if 5GB is enough, is to use iDrive or OneDrive.

My third point is optional, and certainly less important than the first two: running Disk First Aid or fsck doesn't always work. Sad but true. I typically got a “fsck can't repair it properly” incident about once a year, and ended up with a growing stack of hard disks, each holding a year's worth of backups, all mountable only read-only.

DiskWarrior has so far been reliable in restoring broken volumes to a fully working state. NB: as of 2021, DiskWarrior can't repair APFS volumes, so stay with HFS+ volumes for your Time Machine backups.

Here are my script and crontab for mounting a Time Machine (TM) drive from the network, requesting a backup, and dismounting the TM drive. It uses wakeonlan to wake the server from sleep, and ping to confirm it's up before trying to mount. It uses osascript to mount the volume because that handles saving the network password in your keychain.

#!/usr/bin/env bash

# Needs bash rather than plain sh: it uses [[ ]], arrays, {1..50} and (( )).

#

# ------------------------------------------------------

# defineNamesAndPaths

smbServer=myServerName

smbServerfqdn=$smbServer.local

smbServerMacAddress='your-server-mac-address-here'

smbVolumeUrl="smb://$smbServerfqdn/D"

tmDiskImageName='TM2023.sparsebundle'

tmVolumeMountedAtPath='/Volumes/Time Machine Backups'

mountedAtMaybePath1="/Volumes/$smbServerfqdn/Backups"

mountedAtMaybePath2="/Volumes/D/Backups"

foundImagePath=$(find /Volumes -maxdepth 3 -iname "$tmDiskImageName" 2>/dev/null | head -n 1)

mountedAtMaybePath3=$( [ -n "$foundImagePath" ] && dirname "$foundImagePath" )

declare -a maybeMountedPaths=("$mountedAtMaybePath1/$tmDiskImageName" "$mountedAtMaybePath2/$tmDiskImageName" "$mountedAtMaybePath3/$tmDiskImageName")

#-------------------------------------------------------

# helpAndExit

if [[ "$1" == -h || "$1" == *help* ]] ; then

echo "$0

mount a time machine backup diskimage from the network and kick off a backup.

-unmount : unmount the time machine volume.

-fsck : attach the volume with -nomount and run fsck

Current settings:

Attach from : $smbVolumeUrl/$tmDiskImageName

Mount at : $tmVolumeMountedAtPath

This script is admin-editable. Edit the script to set smbServer url

and mac address, the diskimage name, and the mounted path.

These are the current settings in $0 :

smbServer=$smbServer

smbServerfqdn=$smbServerfqdn #Used for ping

smbServerMacAddress=$smbServerMacAddress #Used for wakeonlan if available

smbVolumeUrl=$smbVolumeUrl

mountedAtMaybePath1=$mountedAtMaybePath1

mountedAtMaybePath2=$mountedAtMaybePath2

tmDiskImageName=$tmDiskImageName

tmVolumeMountedAtPath=$tmVolumeMountedAtPath

"

exit

fi

#-----------------------------------------------------------

# cron jobs get a very truncated path and can't find ping, diskutil, hdiutil, tmutil ...

PATH="$PATH:/sbin:/usr/sbin:/usr/bin:/usr/local/bin"

echo '#-------------------------------------------------------'

date

echo "$0 $@"

#-------------------------------------------------------

# unmountAndExitIfRequested

if [[ "$1" == *unmount* ]] ; then

tmutil status

if [[ ! -d "$tmVolumeMountedAtPath" ]] ; then

echo "$tmVolumeMountedAtPath is already unmounted"

elif [ -n "$(tmutil status | grep 'Running = 0')" ] ; then

echo "unmounting ..."

/usr/sbin/diskutil unmount "$tmVolumeMountedAtPath"

else

echo "not unmounting $tmVolumeMountedAtPath because tm status says still running."

fi

exit

fi

# ---

#-------------------------------------------------------

# wakeAndConfirmPingableElseExit

#

# wakeonlan : I got mine from brew install – https://github.com/jpoliv/wakeonlan/blob/master/wakeonlan

# Otherwise try https://ddg.gg/bash%20script%20wakeonlan

if command -v wakeonlan >/dev/null 2>&1 ; then wakeonlan "$smbServerMacAddress" ; fi

pinged=0

for tried in {1..50} ; do ping -c 1 -t 5 "$smbServerfqdn" 2>&1 && pinged=1 && break ; done

if (( pinged == 0 )) ; then

echo "failed to ping $smbServerfqdn. Exiting."

exit

fi

sleep 10

if (( $tried > 2 )) ; then

echo "waiting in case it was a cold-ish start..."

sleep 10

fi

# ---

#-------------------------------------------------------

# mount Network Volume but nomount sparsebundle and run fsck

if [[ "$1" == *fsck* ]] ; then

echo 'mounting ...'

osascript -e 'mount volume "'$smbVolumeUrl'"'

echo 'Trying locations to attach ...'

ok=0

for tryLocation in "${maybeMountedPaths[@]}"

do

echo trying to mount from $tryLocation …

hdiutil attach -nomount "$tryLocation" && ok=1 && break

done

if [ $ok -eq 1 ] ; then

id=$(diskutil list | grep "Time Machine Backups" | grep -oE "disk[0-9]+s[0-9]+")

echo "Running fsck on /dev/$id"

fsck_hfs -y /dev/$id || exit

fi

fi

# --

#-------------------------------------------------------

# mountAndAttach

echo 'mounting ...'

osascript -e 'mount volume "'$smbVolumeUrl'"'

echo 'Trying locations to attach ...'

ok=0

for tryLocation in "${maybeMountedPaths[@]}"

do

if [ -d "$tryLocation" ] ; then

echo trying to mount from "$tryLocation" …

hdiutil attach "$tryLocation" && ok=1 && echo "attached OK" && break

else

echo not found at $tryLocation

fi

done

[ "$ok" -eq 0 ] && echo "failed to mount $tmDiskImageName at $mountedAtMaybePath1 or $mountedAtMaybePath2 or $mountedAtMaybePath3"

# ---

#-------------------------------------------------------

# requestTimeMachineBackup

echo 'requesting backup.'

tmutil startbackup --auto

echo 'Done.'

# ---

And the crontab lines:

5 10-23/4 * * * /Users/chris/Applications/tmbackupnow.sh >> /Users/chris/Applications/Logs/tmbackupnow.log 2>&1

20,35 10-23/4 * * * /Users/chris/Applications/tmbackupnow.sh -unmount >> /Users/chris/Applications/Logs/tmbackupnow.log 2>&1

This schedule does 3 or 4 backups per working day on top of the local snapshots that Time Machine takes anyway, which is possibly overkill if you are squeezed for disk space. At 5 minutes past 10am, 2pm, 6pm and 10pm it tries to mount the TM disk image and kick off a backup; it then tries to dismount the backup disk 15 minutes later, and again 15 minutes after that. Adjust the timing to the size and speed of your backup.
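If you want to double-check what a cron field such as 10-23/4 actually expands to before installing the crontab, here is a small bash sketch. expand_cron_field is a hypothetical helper, not part of cron; it assumes purely numeric fields (no month or weekday names, no leading zeros) and handles *, N, A-B, */S, A-B/S and comma-separated lists of those.

```shell
#!/usr/bin/env bash
# expand_cron_field FIELD MIN MAX  (hypothetical helper, not part of cron)
# Prints the values one numeric cron field matches, e.g. the hour field
# "10-23/4" -> 10 14 18 22. Handles "*", "N", "A-B", "*/S", "A-B/S" and
# comma-separated lists of those; no month/day names, no leading zeros.
expand_cron_field() {
  local field=$1 min=$2 max=$3 part range step lo hi v
  local -a parts out=()
  # Split on commas into an array; read does not glob, so "*" stays literal.
  IFS=',' read -r -a parts <<< "$field"
  for part in "${parts[@]}"; do
    range=${part%%/*}          # the part before any "/step"
    step=1
    if [ "$range" != "$part" ]; then step=${part#*/}; fi
    case $range in
      '*') lo=$min; hi=$max ;;               # whole range
      *-*) lo=${range%-*}; hi=${range#*-} ;; # A-B
      *)   lo=$range; hi=$range ;;           # single value
    esac
    for (( v = lo; v <= hi; v += step )); do out+=("$v"); done
  done
  echo "${out[*]}"
}
```

For the crontab above, expand_cron_field '10-23/4' 0 23 prints 10 14 18 22 and expand_cron_field '20,35' 0 59 prints 20 35, so the unmount attempts land at 10:20, 10:35, 14:20 and so on.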

My third copy, on top of Time Machine, is Syncthing (“continuous file synchronization”), which is great. It's like being able to set up a load of open-source cloud drives, but using your local network too.

My fourth copy is either GitHub or Bitbucket for code, and iDrive or OneDrive for documents and graphics.

My fifth copy will be scripted backups to Azure storage, which seems like the cheapest way to do cloud backups. Meanwhile I'm paying Apple or Microsoft each month for big enough cloud storage.

… is a necessary rule of thumb for computer-based knowledge & design workers. But add the lesson of cloud computing:

Backups: If you don't have 3 copies, you aren't serious.

The standard redundancy for cheap cloud storage options is 3 copies. Anything less is reduced redundancy, sold at a discount. You should have at least 2 backups, for instance both a home backup disk and a cloud drive or repo.

A big win, when you plan for multiple copies, is that you no longer need any of them to be highly reliable. What matters more is: how fast can you make another copy if one goes down?

Mono goes a long way in running code written for .Net on Windows. It is all very much easier if you either start with cross-platform in mind, or move to .Net Core; but even for existing .Net Framework projects, Mono can run most things, including Asp.Net.

Here's my checklist from a couple of years of opening .Net Framework solution files on a Mac and finding they don't build first time.

Most of these require you to edit the .csproj file to make it cross-platform, so a basic grasp of msbuild is very helpful.

For AspNet: inside the PropertyGroup section near the top of the csproj file, add an element:

<WebProjectOutputDir Condition="'$(WebProjectOutputDir)' == '' AND '$(OS)' == 'Unix'">bin/</WebProjectOutputDir>

Use this if you get a 'The “KillProcess” task was not given a value for the required parameter “ImagePath” (MSB4044)' error message, or if the build output shows you are trying to create files in a top-level absolute /bin/ path.

For AspNet: add Condition="'$(OS)' != 'Unix'" to the reference to Microsoft.Web.Infrastructure.dll AND delete the file from the website bin directory.

<Reference Condition="'$(OS)' != 'Unix'" Include="Microsoft.Web.Infrastructure, Version=1.0.0.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35, processorArchitecture=MSIL">

<Private>True</Private>

<HintPath>..\packages\Microsoft.Web.Infrastructure.1.0.0.0\lib\net40\Microsoft.Web.Infrastructure.dll</HintPath>

</Reference>

For all project types, but only if you need to use the .NET Core dotnet build tooling to build a .NET Framework project on Unix (Mono's msbuild does not need this): add this section somewhere in the csproj file (I put it right at the bottom) to resolve .NET Framework 4 reference paths:

<PropertyGroup Condition="$(TargetFramework.StartsWith('net4')) and '$(OS)' == 'Unix'">

<!-- When compiling .NET SDK 2.0 projects targeting .NET 4.x on Mono using 'dotnet build' you -->

<!-- have to teach MSBuild where the Mono copy of the reference assemblies is -->

<!-- Look in the standard install locations -->

<BaseFrameworkPathOverrideForMono Condition="'$(BaseFrameworkPathOverrideForMono)' == '' AND EXISTS('/Library/Frameworks/Mono.framework/Versions/Current/lib/mono')">/Library/Frameworks/Mono.framework/Versions/Current/lib/mono</BaseFrameworkPathOverrideForMono>

<BaseFrameworkPathOverrideForMono Condition="'$(BaseFrameworkPathOverrideForMono)' == '' AND EXISTS('/usr/lib/mono')">/usr/lib/mono</BaseFrameworkPathOverrideForMono>

<BaseFrameworkPathOverrideForMono Condition="'$(BaseFrameworkPathOverrideForMono)' == '' AND EXISTS('/usr/local/lib/mono')">/usr/local/lib/mono</BaseFrameworkPathOverrideForMono>

<!-- If we found Mono reference assemblies, then use them -->

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net40'">$(BaseFrameworkPathOverrideForMono)/4.0-api</FrameworkPathOverride>

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net45'">$(BaseFrameworkPathOverrideForMono)/4.5-api</FrameworkPathOverride>

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net451'">$(BaseFrameworkPathOverrideForMono)/4.5.1-api</FrameworkPathOverride>

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net452'">$(BaseFrameworkPathOverrideForMono)/4.5.2-api</FrameworkPathOverride>

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net46'">$(BaseFrameworkPathOverrideForMono)/4.6-api</FrameworkPathOverride>

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net461'">$(BaseFrameworkPathOverrideForMono)/4.6.1-api</FrameworkPathOverride>

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net462'">$(BaseFrameworkPathOverrideForMono)/4.6.2-api</FrameworkPathOverride>

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net47'">$(BaseFrameworkPathOverrideForMono)/4.7-api</FrameworkPathOverride>

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net471'">$(BaseFrameworkPathOverrideForMono)/4.7.1-api</FrameworkPathOverride>

<FrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != '' AND '$(TargetFramework)' == 'net472'">$(BaseFrameworkPathOverrideForMono)/4.7.2-api</FrameworkPathOverride>

<EnableFrameworkPathOverride Condition="'$(BaseFrameworkPathOverrideForMono)' != ''">true</EnableFrameworkPathOverride>

<!-- Add the Facades directory. Not sure how else to do this. Necessary at least for .NET 4.5 -->

<AssemblySearchPaths Condition="'$(BaseFrameworkPathOverrideForMono)' != ''">$(FrameworkPathOverride)/Facades;$(AssemblySearchPaths)</AssemblySearchPaths>

</PropertyGroup>

For projects that have lived through the C# evolution from C# 5 to C# 7: you may need to remove duplicate references to, e.g., System.ValueTuple. Add Condition="'$(OS)' != 'Unix'" to the reference. This applies to types that MS put on NuGet.org during the evolution. idk why msbuild builds without complaint on Windows but not on Unices.

Example:

<Reference Condition="'$(OS)' != 'Unix'" Include="System.ValueTuple, Version=4.0.1.1, Culture=neutral, PublicKeyToken=cc7b13ffcd2ddd51, processorArchitecture=MSIL">

<HintPath>..\packages\System.ValueTuple.4.3.1\lib\netstandard1.0\System.ValueTuple.dll</HintPath>

</Reference>

For references to Microsoft.VisualStudio.TestTools.UnitTesting: add a NuGet reference to MSTest V2 from nuget.org and make it conditional on the OS:

<ItemGroup Condition="'$(OS)' == 'Unix'">

<Reference Include="MSTest.TestFramework" Version="2.1.1">

<HintPath>..\packages\MSTest.TestFramework.2.1.2\lib\net45\Microsoft.VisualStudio.TestPlatform.TestFramework.dll</HintPath>

</Reference>

<Reference Include="coverlet.collector" Version="1.3.0" >

<HintPath>..\packages\coverlet.collector.1.3.0\build\netstandard1.0\coverlet.collector.dll</HintPath>

</Reference>

</ItemGroup>

Note this will only get you to a successful build. To run the tests on Unix you then have to download and build https://github.com/microsoft/vstest and run it with, e.g.:

mono ~/Source/Repos/vstest/artifacts/Debug/net451/ubuntu.18.04-x64/vstest.console.exe --TestAdapterPath:~/Source/Repos/vstest/test/Microsoft.TestPlatform.Common.UnitTests/bin/Debug/net451/ MyTestUnitTestProjectName.dll

Case Sensitivity & mis-cased references

Windows programmers are used to a case-insensitive filesystem. So if code or config contains references to files, you may need to correct mismatched casing. Usually a 'FileNotFoundException' will tell you if you have this problem.
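To hunt for a mis-cased reference, you can compare the referenced name against the real directory listing, both exactly and case-insensitively. This is a bash sketch; check_case is a hypothetical helper, and it only checks the file name within one directory, not every component of a path.

```shell
#!/usr/bin/env bash
# check_case DIR NAME  (hypothetical helper, not a standard tool)
# Prints "ok", "mis-cased" or "missing" for one file name inside DIR.
# Compares against the real directory listing, so it gives the same answer
# on a case-insensitive Mac volume as on a case-sensitive Linux one.
check_case() {
  local dir=$1 name=$2 entry lower_name
  lower_name=$(printf '%s' "$name" | tr '[:upper:]' '[:lower:]')

  # Pass 1: exact match, casing included.
  for entry in "$dir"/*; do
    if [ "${entry##*/}" = "$name" ]; then
      echo "ok: $name"
      return 0
    fi
  done

  # Pass 2: same name apart from casing.
  for entry in "$dir"/*; do
    entry=${entry##*/}
    if [ "$(printf '%s' "$entry" | tr '[:upper:]' '[:lower:]')" = "$lower_name" ]; then
      echo "mis-cased: $name should be $entry"
      return 1
    fi
  done

  echo "missing: $name"
  return 2
}
```

For example, check_case Views/Home index.cshtml would report "mis-cased: index.cshtml should be Index.cshtml" if the file on disk is actually Index.cshtml.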

The Registry, and other Permissions

See this post for more: https://www.cafe-encounter.net/p1510/asp-net-mvc4-net-framework-version-4-5-c-razor-template-for-mono-on-mac-and-linux

It is helpful to be somewhat familiar with Microsoft's docs on MSBuild Concepts, since msbuild will be issuing most of your build errors. If you have installed Mono then you can run msbuild from the command line with extra diagnostics, e.g.

msbuild -v:d >> build.log

You can also run web applications from the command line just by running

xsp

from the project directory.
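A detailed msbuild log can run to thousands of lines, so it can help to see at a glance which error codes dominate. This bash sketch assumes the usual "file(line,col): error CODE: message" line format; summarize_build_errors is a hypothetical helper. Because msbuild may repeat errors in its closing summary, and because >> appends to earlier runs, treat the counts as relative rather than exact.

```shell
#!/usr/bin/env bash
# summarize_build_errors LOGFILE  (hypothetical helper)
# Counts each error code (CS0246, MSB4044, ...) in a build log,
# most frequent first, printed as "CODE COUNT".
# Assumes the canonical "file(line,col): error CODE: message" format.
summarize_build_errors() {
  grep -oE ': error [A-Z]+[0-9]+' "$1" \
    | sed 's/^: error //' \
    | sort | uniq -c | sort -rn \
    | awk '{ print $2, $1 }'
}
```

Usage: after msbuild -v:d >> build.log, run summarize_build_errors build.log and start with whichever code tops the list.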

For reasons best not examined too closely I switch between Mac and PC which, since I earn my crust largely with .Net development, means switching between Visual Studio with MS.Net and MonoDevelop with Mono.

Mono is very impressive; it is not at all a half-hearted effort, and it does some things that MS haven't done. But when switching environments, there's always the occasional gotcha. Here are some that have got me, and some solutions.