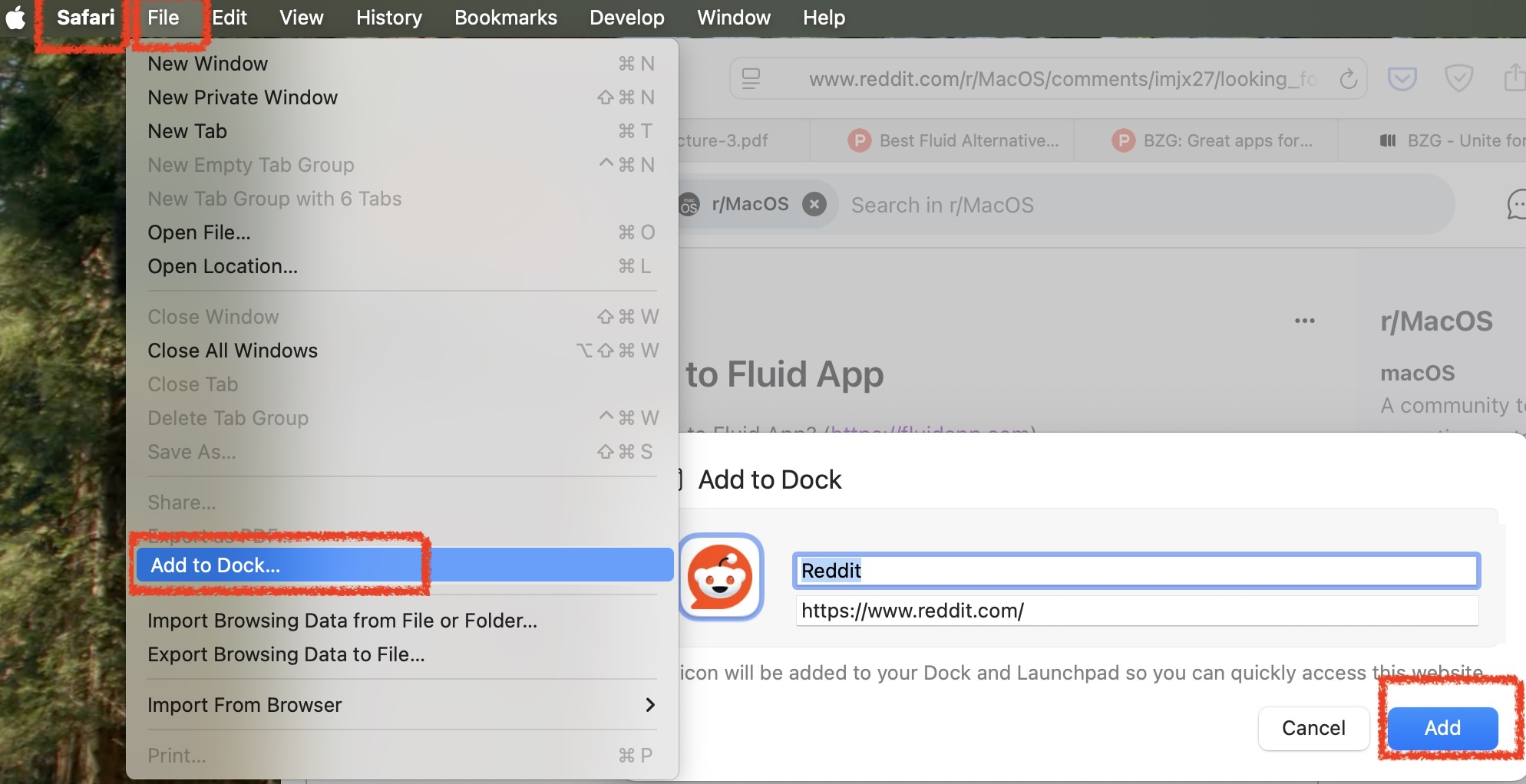

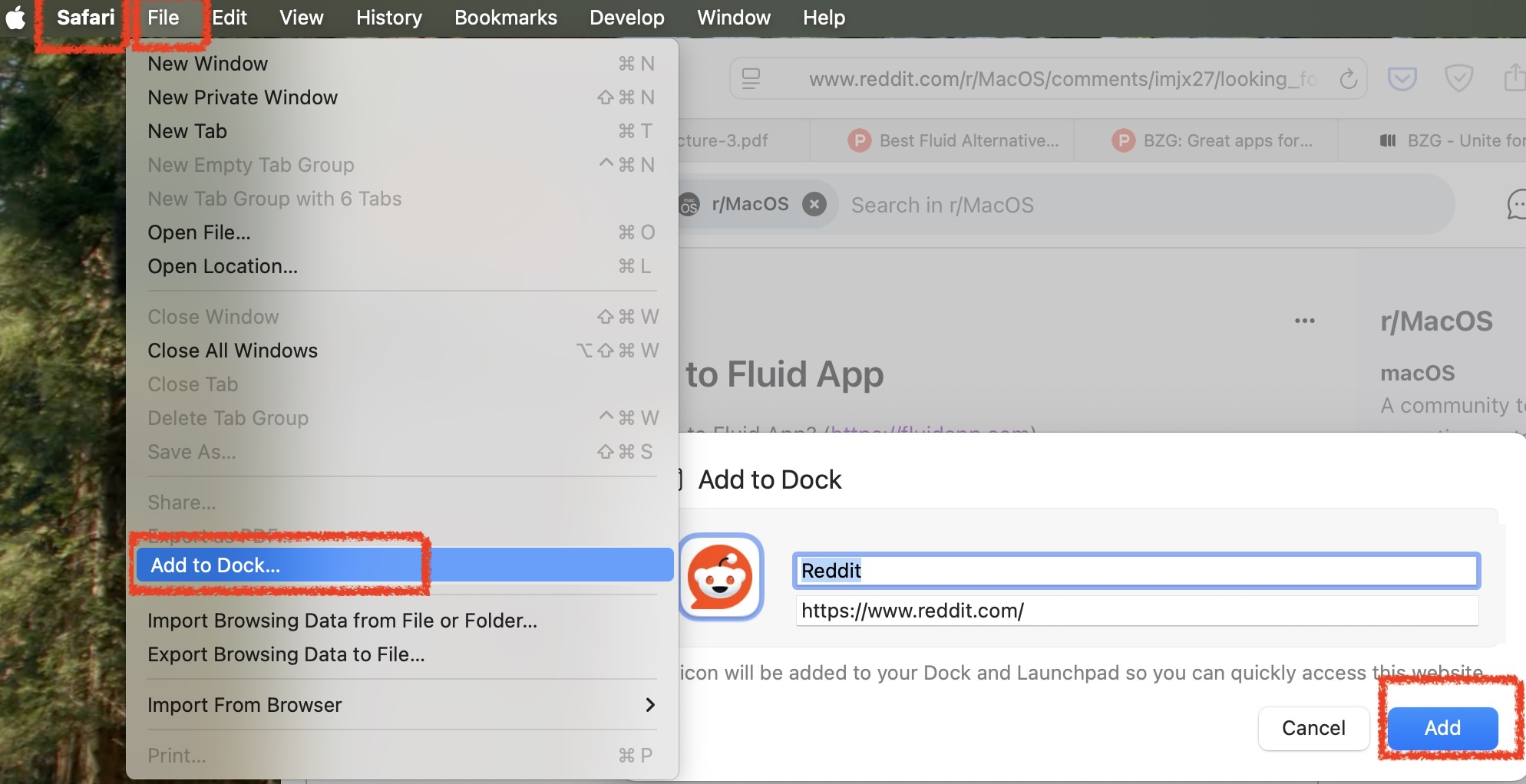

For MacOs users looking for a replacement for Fluid.app that runs on Apple Silicon, it's in the Safari File menu:

Like Fluid.app, it creates an application in your user's ~/Applications directory.

